Software maintenance and modernization is a critical challenge for enterprises managing hundreds or thousands of repositories. Whether upgrading Java versions, migrating to new AWS SDKs, or modernizing frameworks, the scale of transformation work can be overwhelming. AWS Transform custom uses agentic AI to perform large-scale modernization of software, code, libraries, and frameworks to reduce technical debt. It handles diverse scenarios including language version upgrades, API and service migrations, framework upgrades and migrations, code refactoring, and organization-specific transformations. Through continual learning, the agent improves from every execution and developer feedback, delivering high-quality, repeatable transformations without requiring specialized automation expertise.

Organizations need to run transformations using AWS Transform custom concurrently across their entire code estate to meet aggressive modernization timelines and compliance deadlines. Running it at enterprise scale requires a solution that processes repositories in parallel in a controlled remote cloud environment, manages credentials securely, and provides visibility into transformation progress. Today, we’re introducing an open-source solution that brings production-grade scalability, reliability, and monitoring to AWS Transform custom. This infrastructure enables you to run transformations on thousands of repositories in parallel using AWS Batch and AWS Fargate, with REST API access for programmatic control and comprehensive Amazon CloudWatch monitoring.

AWS Transform custom provides powerful AI-driven code transformation capabilities through its CLI. To effectively scale transformations across enterprise codebases, organizations need:

Scale: Ability to run transformations on 1000+ repositories concurrently rather than one-by-one

Infrastructure: Dedicated compute resources for long-running transformations beyond developers’ laptops

API Access: REST API for programmatic orchestration and seamless integration with CI/CD pipelines

Monitoring: Centralized visibility into transformation progress and status across multiple repositories

Reliability: Automatic retries, secure credential management, and built-in fault tolerance

This solution provides complete, production-ready infrastructure that addresses these challenges:

The solution uses a serverless architecture built on AWS managed services:

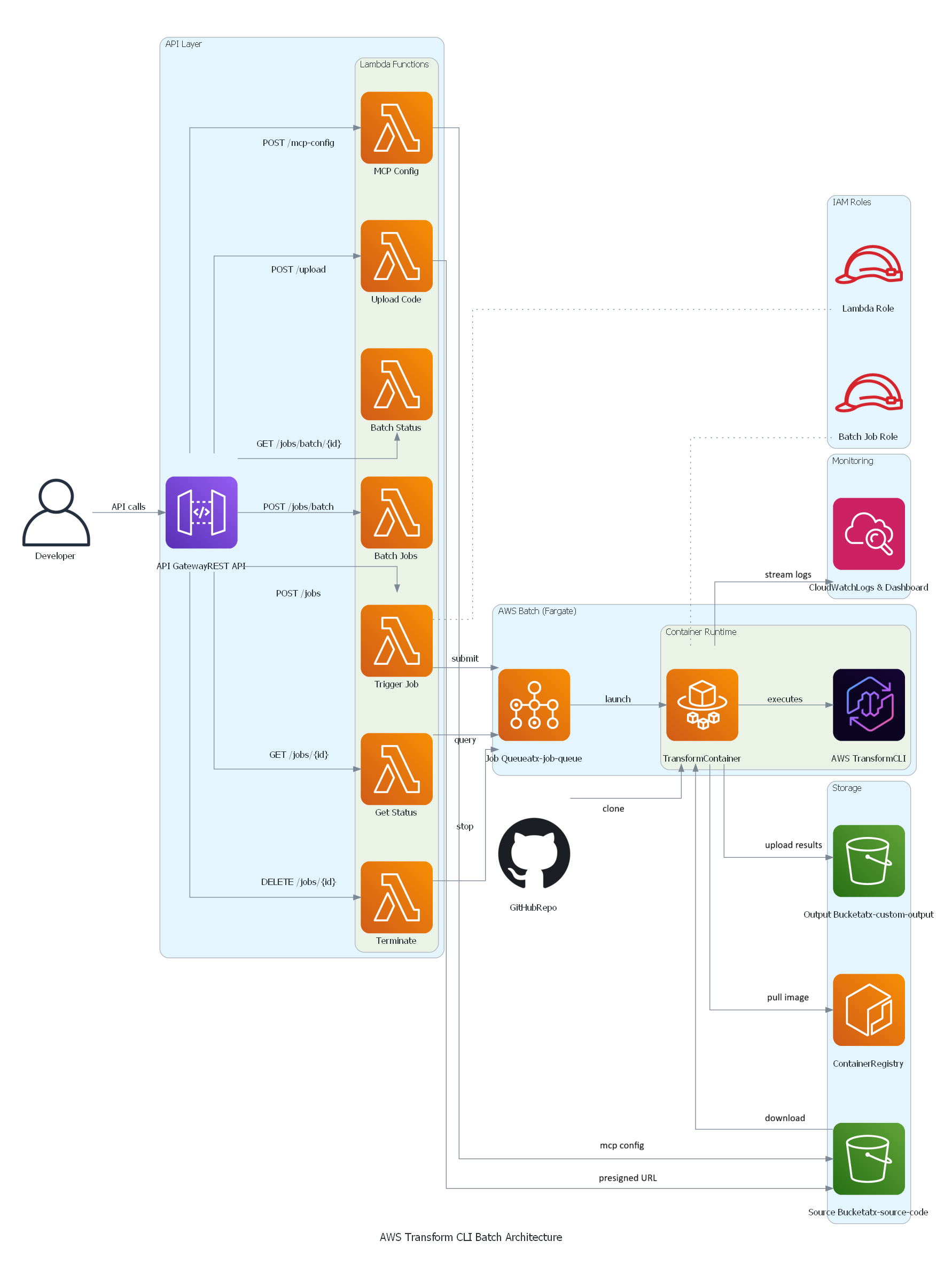

AWS Transform custom Batch solution architecture

Key Components:

Before deploying, ensure you have:

Step 1: Clone the Repository

Step 2: Set Environment Variables

Step 3: Verify prerequisites

This checks that Docker is installed and running, AWS CLI v2 is configured with credentials, Git is available, and your AWS account has the required VPC and public subnets.

Step 4: Set up IAM Permissions (Optional, but recommended)

Generate a least-privilege IAM policy instead of using broad permissions:

This creates iam-custom-policy.json with minimum permissions scoped to your specific resources.

Create and attach the policy:

Note: If you have administrator access, you can skip this step and proceed directly to deployment.

Step 5: Deploy with CDK (One Command Does Everything!)

Time: 20-25 minutes (all resources)

What CDK Does Automatically:

What Gets Deployed:

See cdk/README.md for detailed instructions and configuration options.

Step 6: Get Your API Endpoint

After deployment completes, retrieve the API endpoint URL:

This endpoint is used in all subsequent API calls.
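Because CDK deploys through AWS CloudFormation, one way to look up the endpoint programmatically is to read it from the stack outputs. The sketch below only shows how to pick a value out of a response in the DescribeStacks shape. The stack name, output key, and URL are hypothetical placeholders, not names taken from this solution; check cdk/README.md for the real ones.

```python
from typing import Any, Dict, Optional


def find_stack_output(response: Dict[str, Any], output_key: str) -> Optional[str]:
    """Return one output value from a CloudFormation DescribeStacks response."""
    for stack in response.get("Stacks", []):
        for output in stack.get("Outputs", []):
            if output.get("OutputKey") == output_key:
                return output.get("OutputValue")
    return None


# Sample data in the DescribeStacks response shape. Every value is made up.
sample_response = {
    "Stacks": [
        {
            "StackName": "ExampleApiStack",  # hypothetical stack name
            "Outputs": [
                {
                    "OutputKey": "ApiEndpoint",  # hypothetical output key
                    "OutputValue": "https://example.invalid/prod",
                }
            ],
        }
    ]
}

api_endpoint = find_stack_output(sample_response, "ApiEndpoint")
print(api_endpoint)
```

With real credentials, the same function could be applied to the result of a boto3 CloudFormation client's describe_stacks call for the deployed stack.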

If you prefer manual control over each deployment step or need to customize individual components, use the bash script deployment. See deployment/README.md for the complete 3-step process with detailed explanations of what each script deploys.

Quick test: Run cd ../test && ./test-apis.sh to validate all API endpoints (MCP, transformations, bulk jobs, campaigns).

Submit a Python version upgrade transformation:

This API call triggers a Python version upgrade transformation on the todoapilambda public Git repository. It uses the AWS Managed transformation to upgrade from the current Python version to Python 3.13. The configuration parameter specifies an additional validation command to run, and the plan context specifies the target version and the location of the Python 3.13 installation in the container. The -x flag runs the transformation in non-interactive mode, and the -t flag trusts all tools for this transformation.

The API returns a job ID for tracking. Job names are auto-generated from the source repository and transformation type.
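For readers who want to script the submission, here is a minimal Python sketch of preparing such a request. The `/jobs` path, the `source` and `command` field names, the repository URL, and the command placeholder are all assumptions made for illustration; the real request shape is documented in api/README.md. Only the -x and -t flags come from the description above. Authentication is omitted, and the request is built but not sent.

```python
import json
import urllib.request


def with_noninteractive_flags(command: str) -> str:
    """Append -x (non-interactive mode) and -t (trust all tools)."""
    return f"{command} -x -t"


def build_job_request(api_endpoint: str, source_repo: str, command: str) -> urllib.request.Request:
    """Build (but do not send) a POST request that submits one job."""
    body = {"source": source_repo, "command": command}  # hypothetical field names
    return urllib.request.Request(
        url=api_endpoint.rstrip("/") + "/jobs",  # hypothetical path
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


request = build_job_request(
    "https://example.invalid/prod/",  # placeholder endpoint
    "https://github.com/example/repo",  # placeholder repository
    with_noninteractive_flags("atx <transformation-arguments>"),  # placeholder command
)
print(request.get_method(), request.full_url)
print(request.data.decode("utf-8"))
```

Keeping request construction separate from sending makes the payload easy to inspect or log before anything reaches AWS Batch.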

See api/README.md for complete API documentation with examples for Java, Node.js, and other transformations.

Transform multiple repositories in a single API call:

This API call triggers a deep static analysis of the codebase to generate hierarchical, cross-referenced documentation for three open source repositories in parallel. The transformation uses the AWS Managed transformation to generate behavioral analysis, architectural documentation, and business intelligence extraction to create a comprehensive knowledge base organized for maximum usability and navigation.

The API submits these jobs asynchronously: it returns a batch ID as soon as the jobs are submitted to AWS Batch. You can then monitor progress as described below.
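Since submission is asynchronous, a client typically polls until the batch reaches a terminal state. The following sketch shows that pattern in Python under stated assumptions: the `status` field and the `COMPLETED`, `FAILED`, and `RUNNING` values are hypothetical, and `fetch_status` stands in for whatever call retrieves the batch status from the API. A stubbed fetcher is used so the example runs without AWS access.

```python
import time
from typing import Any, Callable, Dict

TERMINAL_STATES = {"COMPLETED", "FAILED"}  # hypothetical status values


def wait_for_batch(
    batch_id: str,
    fetch_status: Callable[[str], Dict[str, Any]],
    poll_seconds: float = 30.0,
    max_polls: int = 120,
    sleep: Callable[[float], None] = time.sleep,
) -> Dict[str, Any]:
    """Poll fetch_status until the batch is in a terminal state or polls run out."""
    for _ in range(max_polls):
        status = fetch_status(batch_id)
        if status.get("status") in TERMINAL_STATES:
            return status
        sleep(poll_seconds)
    raise TimeoutError(f"batch {batch_id} did not finish after {max_polls} polls")


# Stubbed status source: two in-progress responses, then a terminal one.
fake_responses = iter(
    [{"status": "RUNNING"}, {"status": "RUNNING"}, {"status": "COMPLETED"}]
)
result = wait_for_batch(
    "batch-123",  # placeholder batch ID
    lambda _batch_id: next(fake_responses),
    sleep=lambda _seconds: None,  # skip real waiting in this demo
)
print(result)
```

Bounding the number of polls keeps a stuck batch from blocking a pipeline indefinitely.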

See api/README.md for status checking, MCP configuration, and other API endpoints.

Check batch status:

Response shows real-time progress:

After a job completes, the results are stored in your S3 output bucket.

S3 Output Structure:

Results are organized by job name and conversation ID:
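As a rough illustration of working with results organized this way, the sketch below groups object keys by job name and conversation ID. It assumes keys of the form `<job-name>/<conversation-id>/<relative-path>`; that exact layout and the sample keys are hypothetical, so adjust the parsing to match what you see in your output bucket.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple


def group_results(keys: Iterable[str]) -> Dict[Tuple[str, str], List[str]]:
    """Group S3 object keys by (job name, conversation ID)."""
    grouped: Dict[Tuple[str, str], List[str]] = defaultdict(list)
    for key in keys:
        parts = key.split("/", 2)
        if len(parts) < 3:
            continue  # skip keys that do not match the assumed layout
        job_name, conversation_id, relative_path = parts
        grouped[(job_name, conversation_id)].append(relative_path)
    return dict(grouped)


# Made-up keys that follow the assumed layout.
sample_keys = [
    "example-job/conv-001/src/app.py",
    "example-job/conv-001/README.md",
    "other-job/conv-002/pom.xml",
    "stray-object",
]
grouped_results = group_results(sample_keys)
for (job, conversation), paths in grouped_results.items():
    print(job, conversation, paths)
```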

Validation Summary:

AWS Transform CLI generates a validation summary showing all changes made:

This file contains:

Download Results:

The solution includes a CloudWatch dashboard with operational metrics:

Job Tracking:

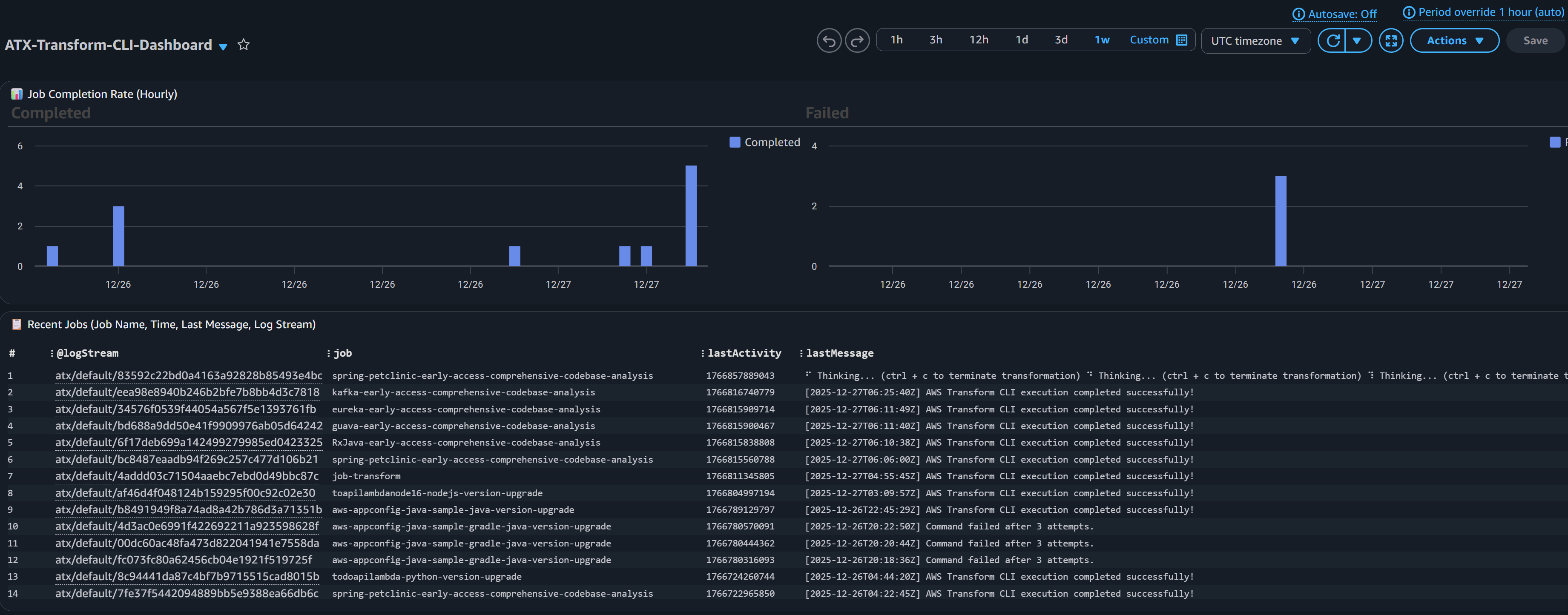

CloudWatch Dashboard screenshot for Job tracking

API and Lambda Health:

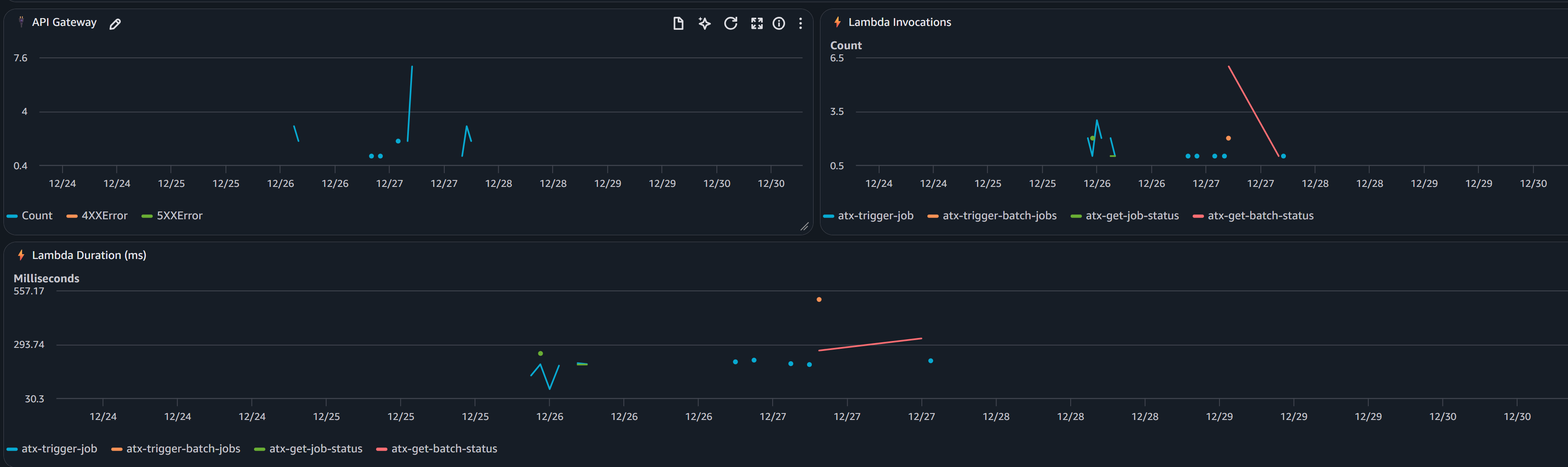

CloudWatch Dashboard screenshot for API and Lambda Health

CloudWatch Logs:

All logs are centralized in CloudWatch Logs (/aws/batch/atx-transform) with real-time streaming.

View logs via AWS CLI:

Or use the included utility:

View in AWS Console: CloudWatch → Log Groups → /aws/batch/atx-transform
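The same log group can also be read from Python. This is a minimal sketch, not part of the solution: it pages through results using the `filter_log_events` operation of the CloudWatch Logs API. The log group name comes from the text above, while the stub client and its messages are invented so that the example runs without AWS credentials.

```python
from typing import Any, Dict, Iterator

LOG_GROUP = "/aws/batch/atx-transform"


def iter_log_messages(logs_client: Any, start_time_ms: int, filter_pattern: str = "") -> Iterator[str]:
    """Yield log messages from the log group, following pagination tokens."""
    kwargs: Dict[str, Any] = {"logGroupName": LOG_GROUP, "startTime": start_time_ms}
    if filter_pattern:
        kwargs["filterPattern"] = filter_pattern
    while True:
        page = logs_client.filter_log_events(**kwargs)
        for event in page.get("events", []):
            yield event["message"]
        token = page.get("nextToken")
        if not token:
            break
        kwargs["nextToken"] = token


class StubLogsClient:
    """Stand-in for a CloudWatch Logs client that returns two canned pages."""

    def filter_log_events(self, **kwargs: Any) -> Dict[str, Any]:
        if "nextToken" not in kwargs:
            return {"events": [{"message": "job started"}], "nextToken": "page-2"}
        return {"events": [{"message": "job finished"}]}


messages = list(iter_log_messages(StubLogsClient(), start_time_ms=0))
print(messages)
```

With boto3 installed and credentials configured, passing `boto3.client("logs")` in place of the stub should read the real log group.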

AWS Transform custom supports Model Context Protocol (MCP) servers to extend the AI agent with additional tools. Configure MCP servers via API:

The configuration is stored in S3 and automatically available to all transformations. Test with atx mcp tools to list configured servers.
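MCP clients commonly describe servers in a JSON document with a top-level `mcpServers` object. The sketch below builds a document in that common shape. Whether this solution's API expects exactly this shape is an assumption, and the server name, command, and arguments are hypothetical; api/README.md has the supported format.

```python
import json
from typing import Any, Dict


def build_mcp_config(servers: Dict[str, Dict[str, Any]]) -> str:
    """Serialize server definitions in the common mcpServers JSON shape."""
    return json.dumps({"mcpServers": servers}, indent=2)


config_json = build_mcp_config(
    {
        "example-server": {  # hypothetical server name
            "command": "npx",  # hypothetical launcher command
            "args": ["-y", "example-mcp-server"],  # hypothetical package
        }
    }
)
print(config_json)
```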

See api/README.md for status checking, MCP configuration, and other API endpoints.

Transformations may need access to private Git repositories or artifact registries. To provide it, extend the base container with the required credentials.

Two approaches:

Steps:

See container/README.md for complete setup instructions, examples, and security best practices.

Redeploying after customization:

If using CDK:

CDK automatically detects Dockerfile changes and rebuilds. If changes aren’t detected, force rebuild:

If using bash scripts:

The infrastructure will use your custom container with private repository access. You can also customize the container to add support for additional language versions or entirely new languages based on your specific requirements.

See container/README.md for complete examples.

Note: For automated PR creation and pushing changes back to remote repositories after transformation, you have two options: (1) extend container/entrypoint.sh with git commands using your private credentials (see commented placeholder in the script), or (2) use a custom Transformation definition with MCP configured to connect to GitHub/GitLab for more sophisticated PR workflows.

Central platform teams can create campaigns through the AWS Transform web interface to manage enterprise-wide migration and modernization projects. For instance, to upgrade all repositories from Java 8 to Java 21, teams create a campaign with the Java upgrade transformation definition and a target repository list. As developers execute transformations, repositories automatically register with the campaign, enabling you to track and monitor progress across your organization.

Creating a Campaign

Executing the Transformation in a Campaign

Once this transformation job succeeds, you can also view the results and dashboard in the web application.

To remove all deployed resources:

This script deletes:

Enterprise software modernization requires infrastructure that can operate at scale with reliability and observability. This solution provides a production-ready platform for running AWS Transform custom transformations on thousands of repositories concurrently.

By combining AWS Batch’s scalability, Fargate’s serverless compute, and a REST API for programmatic access, you can:

The code repository is open-source, fully automated, and ready for you to deploy in your AWS account today.

Get started today with AWS Transform custom

Venugopalan Vasudevan (Venu) is a Senior Specialist Solutions Architect at AWS, where he leads Generative AI initiatives focused on Amazon Q Developer, Kiro, and AWS Transform. He helps customers adopt and scale AI-powered developer and modernization solutions to accelerate innovation and business outcomes.

Dinesh is an Enterprise Support Lead at AWS who specializes in supporting Independent Software Vendors (ISVs) on their cloud journey. With expertise in AWS Generative AI Services, he helps customers leverage Amazon Q Developer, Kiro, and AWS Transform to accelerate application development and modernization through AI-powered assistance.

Brent Everman is a Senior Technical Account Manager with AWS, based out of Pittsburgh. He has over 17 years of experience working with enterprise and startup customers. He is passionate about improving the software development experience and specializes in the AWS Next Generation Developer Experience services.